At last year’s Airflow Summit, we shared how we built a multi-cluster orchestration layer on top of Apache Airflow to run ML workloads across multiple Kubernetes GPU clusters.

Once hundreds of ML engineers started running GPU pipelines in production, we discovered that orchestration alone is not enough. Operating multi-cluster GPU infrastructure introduces new challenges: controlling GPU allocation across teams, observing pipelines across clusters, and helping users run workloads efficiently without wasting expensive GPU resources.

In this talk, we’ll show how our Airflow platform evolved from a workflow orchestrator into an operational control plane for GPU infrastructure. We’ll cover custom scheduling strategies that dynamically route workloads across clusters using Airflow policies and resource awareness, integration with HAMi to improve GPU utilization, and AIOps workflows with KeepHQ that detect underutilized CPU, RAM, and GPU resources. We’ll also present dashboards and AI-assisted tools that shorten time to market and simplify debugging while keeping infrastructure complexity hidden from users.

We’ll be happy to share how our platform continues to evolve with Apache Airflow.

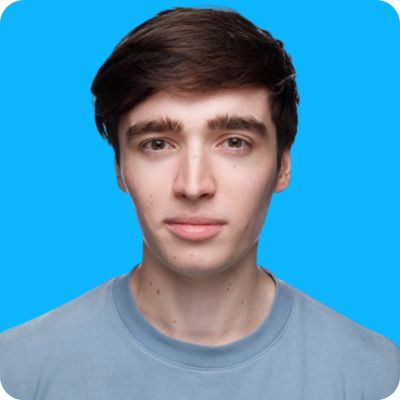

Aleksandr Shirokov

Team Lead MLOps Engineer, Wildberries

Tarasov Alexey

Senior MLOps Engineer, Wildberries